Back when I was in high school, I played Final Fantasy VII and IX (Yes, I skipped VIII). I was never able to finish either game but I remember thinking, “Man, the cutscenes are so awesome! Why can’t the gameplay look as awesome as the cutscenes?”

If you can’t relate to my experience (ok, I’m old), here’s something probably closer to yours: have you ever been hit by an ad for a game while scrolling through social media and been blown away by the graphics? You think to yourself, “Wow, you can play this game on mobile? This looks like a console game!” You download it, play it, and find out that the gameplay looks nothing like the ad you saw.

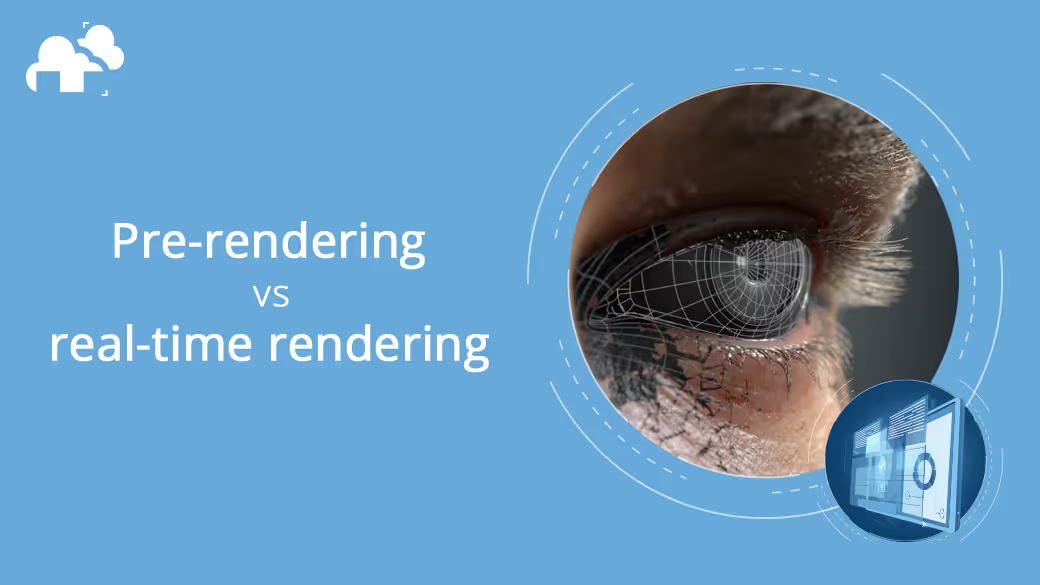

In an oversimplified nutshell, that, my friend, is the difference between pre-rendering and real-time rendering.

The simplest way to define rendering is this: it is the process of producing 2-dimensional images out of 3-dimensional data. This process involves solving gajillions of mathematical equations and therefore takes serious computational power. If those last two sentences confused you, here’s an article about the benefits of using a render farm and how render farms save time to help you get a better handle on what rendering is.

To be a bit more specific, rendering is that final step in the production of 3D graphics where you “finalize” your project, in effect transporting it out of the environment of your 3D-making program and into a form that is easily displayable on any screen on which it may be viewed: laptop, mobile phone, TV, or movie screen. What do these screens have in common? They are all 2-dimensional. Hence, the need for processing. The need for rendering.

Pre-rendering, also known as offline rendering, is the type of rendering where the rendered image (or sequence of images, a.k.a. animation) is displayed at a later time. What does this mean exactly? It means that you design your 3D image and make it as detailed and textured and photorealistic as you want it to be. Then when you’re done, you let your CPU (or GPU, or both) process your 3D image into a 2D one, one that can be displayed on people’s screens. Depending on how complex and detailed you made your image (the more complex, the more calculations to be made), rendering will take some or a lot of time before you are able to display your image. Hence the term, pre-rendering.

This is the type of rendering performed by our render farm. In a nutshell, because you want to cut down the time it takes to render your image, you send your project to a render farm via the internet, buy time and access on their servers, and let their uber-powerful processors do the rendering for you. Typically, a project that will take hours to render on your personal machine will take mere minutes or seconds on a render farm.

On the other hand, real-time rendering is the same process (converting 3D information into a 2D image) done in a much, much faster timeframe. As the name implies, this type of rendering produces an image practically instantaneously from the moment data is inputted. To be more precise, a typical setting in a real-time rendering engine like Unity is 30 to 60 frames per second. This means that the engine is able to process and display 30 to 60 2D images from 3D information in one second. Try to wrap your brain around that for a minute. That is light-years away from a single image output of minutes or hours of pre-rendering.
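To put numbers on that, here is a minimal sketch in Python of the time budget a real-time engine has for each frame. The function names are my own, and the 20-minute offline render time is just an illustrative assumption, not a measurement.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


def frames_in(seconds: float, fps: float) -> float:
    """How many frames a real-time engine produces in the given time."""
    return seconds * fps


for fps in (24, 30, 60):
    print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")

# Assumed example: one pre-rendered image that takes 20 minutes.
offline_seconds = 20 * 60
print(f"Frames a 60 fps engine draws in that time: {frames_in(offline_seconds, 60):.0f}")
```

At 60 frames per second the engine has roughly 16.7 milliseconds to finish each image, which is why real-time rendering has to cut corners that pre-rendering does not.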

But is pure speed the right way to look at pre-rendering and real-time rendering? The short answer is no.

Now for the long answer.

Speed is always desirable in rendering, whether it’s pre-rendering or real-time. But depending on your purpose, how you regard speed or what you consider fast will vary. You can say that when it comes to rendering, speed is relative.

If, for example, you are an architectural visualization artist dealing with photorealistic high-resolution representations of a building-in-progress, render times of multiple hours or even days are common if you are using just your own machine to render. So the ability to reduce, say, a 2-hour render to 20 minutes on a render farm is a huge advantage.

But if you are a game designer, taking 20 minutes to render a single image is absolutely unacceptable because this means that if the user turns the in-game character’s head even slightly, it would take 20 minutes for the change in perspective to register on-screen. Forget about creating a game if this is the case. This delay is called latency, and latency is a major buzzkill when it comes to gaming. A 20-minute latency is an exaggerated example. These days, real-time render speeds are usually at 30-60 frames per second. Animation (which is what video games primarily make use of) is nothing but a series of still images shown rapidly enough to create the illusion of movement. Cinema has long used 24 frames per second as the standard rate at which motion looks natural to the human eye. So for your animation to seem and feel natural to the viewer, the series of images would have to be displayed on screen at a rate of 24 frames per second or above.

Now, for real-time rendering, what can cause the frame rate to slow down? Image complexity. If the graphics are too detailed, it takes the processor longer to perform all the necessary calculations and display the images on your screen. If the frames are displayed at a rate that’s too slow, the responsiveness of the game controls suffers. This is where the trade-off between immersive graphics and smooth gameplay lies. And the biggest factor when it comes to 3D graphics? Lighting.

When it comes to making 3D graphics look realistic, you have to understand the art of light. Everything that our eye perceives is reflected light, and different objects, materials, and textures reflect light differently. And since 3D graphics are actually just mathematical information represented on your screen as colors, achieving realistic lighting relies on how well and how efficiently your computer calculates the way light behaves within a particular scene. Pre-rendering and real-time rendering handle light differently.

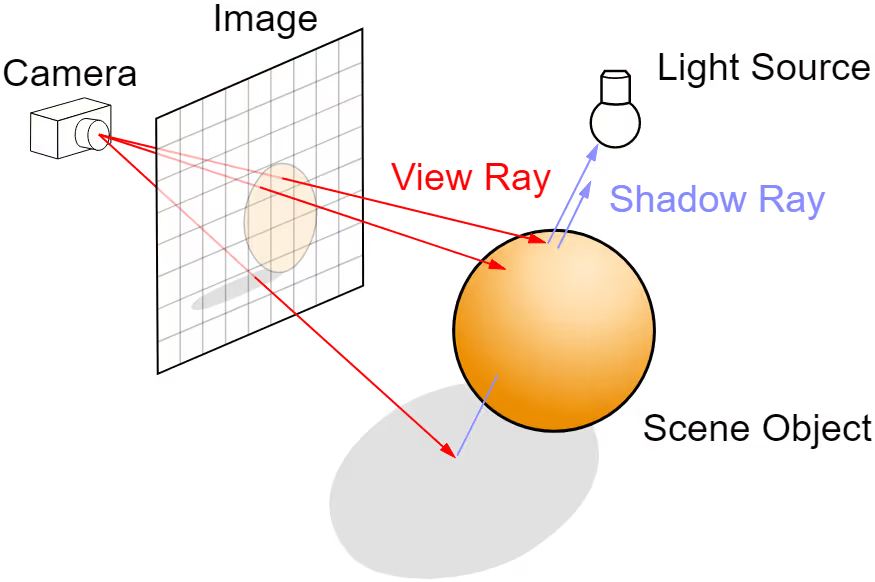

Generally speaking, pre-rendering handles light using a technique called ray tracing. Simply put, ray tracing imitates the way our eyes perceive objects around us: a light ray coming from a source hits an object, bounces off of it, then hits our eyes. If you trace every single ray of light that reaches your eyes back to the object, then back to the light source, that’s ray tracing. This technique yields the most photorealistic results.
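Here is a minimal sketch of that idea in Python, under heavy simplifying assumptions: the scene is a single sphere, there is one point light, and there are no shadows or bounces. All function names are my own for illustration. A production ray tracer follows many rays per pixel through many bounces, which is where the long render times come from.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)


def hit_sphere(origin, direction, center, radius):
    """Distance along the ray to the nearest hit in front of the eye, or None.

    Assumes `direction` has unit length.
    """
    oc = sub(origin, center)
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0:
        return None  # the ray misses the sphere
    t = (-b - math.sqrt(disc)) / 2.0
    return t if t > 0 else None


def trace(origin, direction, center, radius, light_pos):
    """Brightness in [0, 1] seen along one ray: eye -> object -> light."""
    t = hit_sphere(origin, direction, center, radius)
    if t is None:
        return 0.0  # nothing hit, so the pixel stays black
    point = tuple(o + t * d for o, d in zip(origin, direction))
    normal = normalize(sub(point, center))
    to_light = normalize(sub(light_pos, point))
    # Lambert's cosine law: surfaces facing the light are brightest.
    return max(0.0, dot(normal, to_light))


eye = (0.0, 0.0, 0.0)
print(trace(eye, (0.0, 0.0, -1.0), (0.0, 0.0, -3.0), 1.0, (0.0, 0.0, 5.0)))
```

Each call to `trace` computes one ray for one pixel. Multiply that by millions of pixels and several bounces per ray, and you can see why this approach is slow.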

Real-time rendering, again generally speaking, uses a technique called rasterization. Without getting too technical, this technique translates geometric information from the 3D models forming the scene into pixels that are displayed on a 2D screen. This is a faster process than ray tracing, which is why it is the technique often used in real-time rendering, where the system needs to generate an image 30-60 times in one second.
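For the curious, here is a rough sketch of rasterization in Python: project a triangle’s three corners onto the screen, then check which pixels fall inside it. The function names, the camera looking down the negative z-axis, and the tiny 8x8 “screen” are all assumptions made for illustration. Real engines do this on the GPU with far more steps.

```python
def project(vertex, width, height, focal=1.0):
    """Perspective-project a camera-space point (x, y, z), z < 0, to pixel coordinates."""
    x, y, z = vertex
    ndc_x = focal * x / -z  # perspective divide: farther points move toward the center
    ndc_y = focal * y / -z
    px = (ndc_x + 1.0) * 0.5 * width
    py = (1.0 - ndc_y) * 0.5 * height  # flip y because screen rows grow downward
    return px, py


def edge(a, b, p):
    """Signed-area test: tells which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(triangle, width, height):
    """Return the set of (col, row) pixels whose centers lie inside the projected triangle."""
    a, b, c = (project(v, width, height) for v in triangle)
    covered = set()
    for row in range(height):
        for col in range(width):
            p = (col + 0.5, row + 0.5)  # sample at the pixel center
            w0, w1, w2 = edge(a, b, p), edge(b, c, p), edge(c, a, p)
            all_non_negative = w0 >= 0 and w1 >= 0 and w2 >= 0
            all_non_positive = w0 <= 0 and w1 <= 0 and w2 <= 0
            if all_non_negative or all_non_positive:
                covered.add((col, row))
    return covered


triangle = [(-1.0, -1.0, -2.0), (1.0, -1.0, -2.0), (0.0, 1.0, -2.0)]
pixels = rasterize(triangle, 8, 8)
for row in range(8):
    print("".join("#" if (col, row) in pixels else "." for col in range(8)))
```

Notice that nothing here follows light around the scene. The work is mostly simple arithmetic per pixel, which is what makes this route fast.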

If rasterization is faster, shouldn’t you just take this route every time you render? Well, the speed that rasterization brings comes with a trade-off: you lose out on some realism because rasterized renders generally don’t take into account the bouncing of indirect light off of surfaces. Pre-rendering handles this well but, as we have talked about, it takes more time to do so. In rasterized renders, the illusion of natural light is often achieved by techniques like baking, where lighting is calculated ahead of time and stored so it doesn’t have to be recalculated every frame. Lighting, which is the crux of the matter when it comes to CG realism, is handled in a more sophisticated way in pre-rendering than in real-time rendering.
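To give a feel for baking, here is a small sketch in Python. The idea is to run the slow lighting calculation once, save the results in a grid (often called a lightmap), and have the game simply look values up while it runs. The lighting formula below is a made-up stand-in, and the function names are hypothetical, not taken from any particular engine.

```python
def expensive_lighting(u, v):
    """Stand-in for a slow offline lighting calculation at surface coordinate (u, v).

    Made-up formula: brightness falls off with distance from a light above (0.5, 0.5).
    """
    dist_sq = (u - 0.5) ** 2 + (v - 0.5) ** 2
    return 1.0 / (1.0 + 4.0 * dist_sq)


def bake_lightmap(size):
    """Run the slow calculation once per texel, ahead of time."""
    return [
        [expensive_lighting((col + 0.5) / size, (row + 0.5) / size) for col in range(size)]
        for row in range(size)
    ]


def sample_lightmap(lightmap, u, v):
    """At runtime the cost is a table lookup (nearest texel), not a lighting calculation."""
    size = len(lightmap)
    col = min(int(u * size), size - 1)
    row = min(int(v * size), size - 1)
    return lightmap[row][col]


lightmap = bake_lightmap(4)  # done once, before the game runs
print(sample_lightmap(lightmap, 0.5, 0.5))  # done every frame, nearly free
```

The catch is that baked lighting is frozen: if the light or the object moves, the stored values no longer match, which is part of why baked scenes can look less natural than pre-rendered ones.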

However, hardware and software advancements have started to blur this neat delineation between ray tracing and rasterization, and between pre-rendering and real-time rendering.

NVIDIA’s RTX series of GPUs allowed ray tracing to be used in real-time rendering for games. This resulted in more jaw-dropping, eye-widening in-game graphics. Hardware improvements like this have let software like Unreal Engine give game creators the tools to design games that are on par with cinema-level visuals. In fact, Unity (another real-time rendering engine) has put out short films created entirely using real-time rendering to showcase how far real-time rendering has come in terms of photorealism. Check out Adam and The Heretic. However, as in the earlier discussion, pre-rendering still reigns when it comes to organic surfaces. There’s simply too much complexity and therefore too many calculations to deal with when it comes to how light interacts with organic surfaces.

On the other hand, pre-rendering continues to become more efficient, cutting the time it takes to output stunning 3D images through both software and hardware innovation.

These are exciting times for 3D rendering, be it pre-rendering or real-time rendering. More and more, limits continue to be pushed, barriers broken, and ceilings smashed. This means production costs will go down as visual excellence goes up.

As I said, exciting times.
